If you’re not too familiar with the term “crawl budget,” it’s basically how often search engines like Google send out “spiders” or “bots” to crawl and index websites.

And while this might not seem like something you need to worry about, if you want your website to rank well on search engines, it’s actually a pretty big deal.

Crawl budget is a very important but often overlooked aspect of SEO. Here’s what you need to know about it.

What is a website crawl budget?

A website crawl budget is the amount of time and resources that search engine crawlers, such as Googlebot, allocate to crawling and indexing a website’s pages. The crawl budget helps search engines prioritize which pages to crawl and index, as they cannot crawl every page on the internet all the time.

A good rule of thumb is that a small website (fewer than 1,000 pages) can probably be crawled in its entirety during each visit, while a larger website (more than 10,000 pages) may need to be crawled over multiple visits, or may require specialized crawling techniques such as priority crawling.

Factors Affecting Crawl Budget

Several factors influence how much crawl budget a website receives:

- Website size: Larger websites with more pages generally require more crawl budget to keep up with changes and ensure all pages are indexed.

- Link popularity: Websites with more backlinks from high-authority sites are more likely to receive a higher crawl budget, as they are considered more important and likely to be relevant to search queries.

- Server response time: Efficient server response times allow search engines to quickly crawl and index pages, reducing the overall crawl budget needed.

- Indexing priority: Websites can steer crawlers toward specific pages by using robots.txt directives, which control what is crawled, and meta robots tags, which control what is indexed.

Signs of Crawl Budget Issues

If you suspect your website’s crawl budget is limited, you may notice these indicators:

- Slow indexing of new pages: Newly created pages take longer than usual to appear in search results.

- Low page indexing: A significant portion of your pages remains unindexed.

- High crawl error rates: Errors like 404 Not Found or server overload indicate crawl issues.

Optimizing Crawl Budget

To optimize your website’s crawl budget, consider these strategies:

- URL pruning: Remove unused or duplicate pages to reduce the number of resources needed for crawling.

- Robots.txt file optimization: Clearly define crawlable areas and restrict access to non-essential pages.

- Sitemaps: Submit a well-structured sitemap to search engines, providing them with a roadmap of your website’s contents.

- Server optimization: Ensure your server can handle increased crawl traffic while keeping response times low.

- Content quality and relevance: Create high-quality, informative content that is relevant to search queries to increase crawl demand and prioritize page indexing.
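As a rough illustration of the robots.txt strategy above, the sketch below uses Python’s standard `urllib.robotparser` module to check which URLs a set of rules leaves open to crawling. The rules, the `example.com` URLs, and the `crawlable_urls` helper are assumptions made up for this example, and Python’s parser only does simple prefix matching, so it may not agree with Googlebot on wildcard rules.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt rules; replace the paths with your own.
ROBOTS_TXT = """\
User-agent: *
Disallow: /cart/
Disallow: /search
Sitemap: https://www.example.com/sitemap.xml
"""


def crawlable_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that the given robots.txt rules allow for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if parser.can_fetch(user_agent, url)]


urls = [
    "https://www.example.com/",
    "https://www.example.com/products/blue-widget",
    "https://www.example.com/cart/checkout",
    "https://www.example.com/search?q=widget",
]
allowed = crawlable_urls(ROBOTS_TXT, urls)
for url in allowed:
    print("crawlable:", url)
```

Running a check like this before deploying a new robots.txt is one way to confirm that cart and internal search pages are excluded while product pages stay crawlable.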

The hosting server and the crawl capacity limit work together

Googlebot calculates a limit on the number of parallel connections it uses when crawling your site so that it does not overload your servers. The aim is to crawl all of your important content without causing server issues.

How much crawling your website can handle depends on a few conditions:

- Server response to the crawling session: If the site responds quickly, Googlebot can use more connections. If the site slows down or returns server errors while it is being crawled, Googlebot will crawl less.

- The site owner has set a limit in Search Console: If website owners want, they can limit Googlebot’s crawling on their site. Note that setting a higher limit won’t automatically increase crawling.

- Google’s crawling limits: Google has a lot of server power, but it isn’t infinite, so Google has to choose how to spend the resources it has.
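To make the idea of a crawl capacity limit more concrete, here is a minimal sketch of how a crawler could raise or lower its number of parallel connections based on server health. This is not Google’s algorithm, which is not public; the function name `next_connection_limit` and every threshold in it are assumptions chosen only for illustration.

```python
def next_connection_limit(current, avg_response_ms, error_rate,
                          max_limit=10, slow_ms=1000, max_error_rate=0.05):
    """Return the parallel-connection limit for the next crawl session.

    All thresholds are illustrative assumptions, not Google's values.
    max_limit can stand in for a cap set by the site owner.
    """
    if error_rate > max_error_rate or avg_response_ms > slow_ms:
        # The server is struggling: halve the limit, but keep one connection.
        return max(1, current // 2)
    # The server is healthy: add one connection, up to the cap.
    return min(max_limit, current + 1)


limit = 4
limit = next_connection_limit(limit, avg_response_ms=200, error_rate=0.0)
print("after a fast session:", limit)
limit = next_connection_limit(limit, avg_response_ms=1800, error_rate=0.0)
print("after a slow session:", limit)
```

The point of the sketch is the asymmetry: a slow or failing server cuts crawling quickly, while a healthy server earns it back gradually.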

Why is a website crawl budget important for SEO?

When it comes to SEO, the crawl budget is important because if crawling pushes your server close to its limits, this can lead to slower page loading times and even downtime, and search engine spiders that notice the strain will crawl less.

On the other hand, if search engine spiders don’t visit all the pages on your website, they may miss important content and key phrases that could be used to improve your website’s ranking in search results.

How does the crawl budget affect your website SEO?

Crawl budget is not a size measured in kilobytes or megabytes; in practice it comes down to the number of URLs a search engine spider can and wants to crawl on your website in a given period of time. Your website SEO can be affected positively or negatively depending on your crawl budget.

If your crawl budget is too low, your website may not be crawled and indexed by the search engines as often as you would like, which can hurt your rankings. The crawl budget itself is intrinsically related to three major things:

- The type of website: How frequently the content is updated.

- Technical performance of the site: At the site level and at the server level.

- Google’s own discretion over the compute resources assigned to the website.

How does Google calculate the Crawl Budget for each website?

Google fingerprints content as it crawls it. This means that content crawled and indexed for the first time has a “signature” assigned to it, which is then compared with future versions of the same content. Together with other signals, this allows Google to assess, among other things:

- Whether changes have been made.

- Whether the content should be included in the search results.

- Whether the content is a duplicate.

- How often changes are made.

- The frequency and speed of crawling.

- The crawl rate set by the website admin.

- Server capacity and overall website health.
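The fingerprinting idea can be sketched with an ordinary hash. Google’s actual method is not public, so the example below simply assumes a SHA-256 hash over normalized text; the `fingerprint` and `find_duplicates` helpers and the sample pages are hypothetical.

```python
import hashlib


def fingerprint(text):
    """Hash the text after collapsing whitespace and lowercasing it."""
    normalized = " ".join(text.split()).lower()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def find_duplicates(pages):
    """Group URLs whose content produces the same fingerprint."""
    groups = {}
    for url, text in pages.items():
        groups.setdefault(fingerprint(text), []).append(url)
    return [urls for urls in groups.values() if len(urls) > 1]


# Made-up pages: the second URL is a session-specific copy of the first.
pages = {
    "/shoes": "Red running shoes, size 42.",
    "/shoes?sessionid=abc": "Red  running shoes,  size 42.",
    "/hats": "Wool hat, one size.",
}
duplicates = find_duplicates(pages)
print(duplicates)
```

Comparing a stored fingerprint with the fingerprint of a fresh crawl shows whether a page has changed, and grouping equal fingerprints surfaces duplicate URLs that use up crawl budget.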

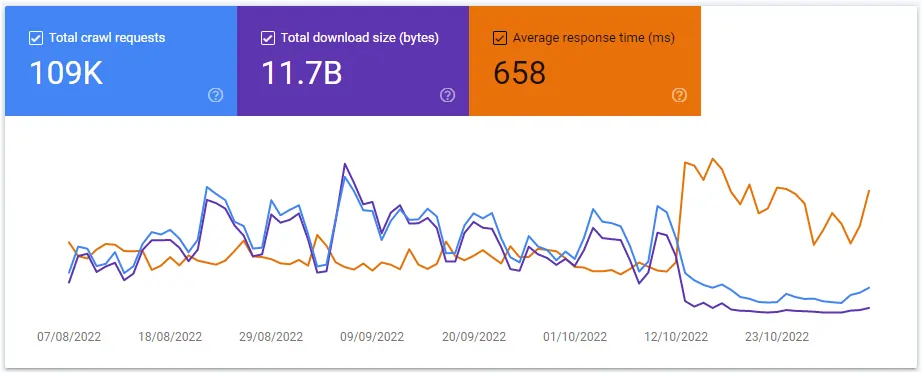

Where can you see your website crawl budget?

You can see your website’s crawl activity in Google Search Console. To do this, log into your GSC account and go to the Settings > Crawl Stats report. Here, you’ll see how many crawl requests Googlebot has made to your site in the past 90 days, as well as the total download size and the average response time.

If you’re not happy with your website’s crawl budget, apply the tips mentioned above to improve it.

Note: There is nothing you can do to tell Google to increase your crawl budget, because the algorithm increases it by itself based on the conditions mentioned above.
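If you also have access to your server’s access logs, you can cross-check the Search Console numbers yourself. The sketch below assumes logs in the common “combined” format and counts requests per day whose user agent mentions Googlebot. The sample log lines are invented, and because a user-agent string can be spoofed, treat the counts as an estimate.

```python
import re
from collections import Counter

# Captures the date part of a timestamp such as [10/Mar/2024:06:25:13 +0000].
DATE_RE = re.compile(r"\[(\d{2}/\w{3}/\d{4}):")


def googlebot_hits_per_day(log_lines):
    """Count log lines per day whose text mentions Googlebot."""
    hits = Counter()
    for line in log_lines:
        if "Googlebot" not in line:
            continue
        match = DATE_RE.search(line)
        if match:
            hits[match.group(1)] += 1
    return hits


# Invented sample lines in combined log format.
sample_log = [
    '66.249.66.1 - - [10/Mar/2024:06:25:13 +0000] "GET / HTTP/1.1" 200 5123 '
    '"-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '66.249.66.1 - - [10/Mar/2024:06:25:40 +0000] "GET /blog HTTP/1.1" 200 8121 '
    '"-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '203.0.113.7 - - [10/Mar/2024:07:01:02 +0000] "GET / HTTP/1.1" 200 5123 '
    '"-" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
    '66.249.66.1 - - [11/Mar/2024:05:10:09 +0000] "GET /pricing HTTP/1.1" 404 312 '
    '"-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
]
hits = googlebot_hits_per_day(sample_log)
for day, count in hits.items():
    print(day, count)
```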

The shocking truth about crawl budget

The crawl budget is not decided by content quality alone. You can have fantastic content on your pages that ranks very well on Google, but if that content never changes, Google has little reason to recrawl it often. The crawl rate is more about how often Google crawls your website based on the frequency of its changes or updates.

Crawling frequency depends on many factors, not just the quality of your content. So don’t ignore the crawl budget, but also keep focusing on creating high-quality content for your audience.

Crawl budget facts:

- You should use it to your advantage.

- It’s quite small.

- It is a very important aspect of SEO that webmasters often overlook.

- It is not possible to fix it without fixing the website’s architecture.

- By optimizing for it, you can improve your website’s rankings and overall performance in search engines.

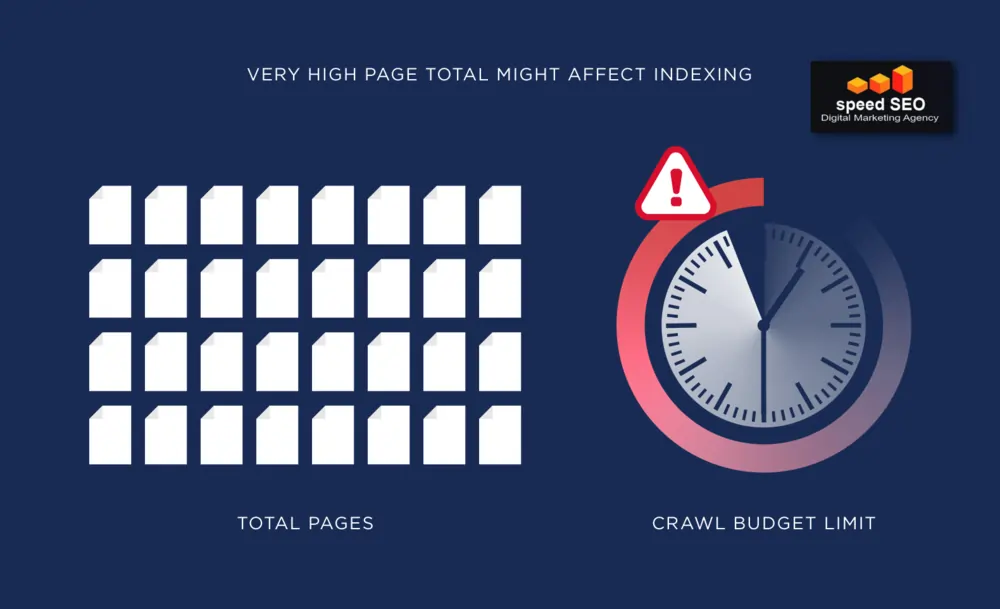

Which website size should pay more attention to its crawl budget?

Large websites are the first to worry about reaching the crawl limit. Search engines like Google can only crawl a certain number of pages on your site at any one time, and larger sites have more pages that can potentially be crawled.

This means that important pages may not be found by the search engines if you don’t optimise your website to make good use of the crawl budget.

To help you understand this, here’s a rough guide to website size:

- Under 10,000 URLs: Small website

- Over 10,000 up to about 500,000 URLs: Medium website

- Over 500,000 URLs: You should start contacting us to make better use of your crawl budget, ASAP!

🏆 Pro tip: The crawl budget itself is not your problem; your problem is your server setup. That is where you optimize your website’s crawl budget.

But smaller websites should also consider their crawl budget and how it may impact their SEO efforts. Crawling too many low-quality pages can prevent important pages from being discovered and indexed. It’s important to regularly audit your website and remove any unnecessary or low-quality pages that may be using up the crawl budget without providing any real value.

The sooner the search engine discovers your best pages, the better it is for your rankings.

Pay Attention to the Crawl Window

The crawl window is the period when the crawler goes over your website. This window is important because it tells you the best time to post your new content so the crawler can see it and index it.

🏆 Pro tip: Set up your sitemap to update as soon as a new URL is created.
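One way to keep a sitemap current is to regenerate it whenever a page is published. The sketch below builds a sitemap in the sitemaps.org XML format with Python’s standard library. The `build_sitemap` helper, the URLs, and the dates are assumptions for illustration; in practice your CMS or a plugin may already do this for you.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(entries):
    """Build sitemap XML from (url, last_modified_date) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in entries:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod
    return ET.tostring(urlset, encoding="unicode")


# Hypothetical pages; call build_sitemap again whenever a URL is added.
entries = [
    ("https://www.example.com/", "2024-03-10"),
    ("https://www.example.com/new-post", "2024-03-11"),
]
sitemap_xml = build_sitemap(entries)
print(sitemap_xml)
```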

10 Crawl budget pitfalls that you should not fall into

As shown above, crawl budget is an important aspect of website optimization that can significantly impact your search engine ranking. Unfortunately, there’s a lot of misinformation out there about the crawl budget. For this reason, too many people fall into these 10 common traps:

1. Excessive Low-Value Pages:

Avoid creating or maintaining a large number of low-value pages, such as duplicate content, session-specific pages, or pages with irrelevant content. These pages consume crawl budget without providing significant value to search engines, potentially hindering the crawling of more important content.

2. Redirect Chains and Errors:

Unnecessary redirect chains can confuse search engine crawlers and waste crawl budget. Ensure that redirects are used only when necessary, and resolve any crawl errors promptly to avoid diverting crawlers from more valuable pages.

3. Crawling Unstable Servers:

High server response times can frustrate search engine crawlers and lead to them abandoning your website. Ensure that your server infrastructure can handle the expected traffic and provide stable response times to optimize crawling efficiency.

4. Lack of Sitemaps:

Sitemaps provide search engines with a clear roadmap of your website’s content, enabling them to prioritize crawling high-value pages. Submit a well-structured sitemap to search engines to guide their crawling activities effectively.

5. Neglecting Robots.txt Management:

The robots.txt file instructs search engine crawlers which pages they should access and which they should avoid. Properly define crawlable and non-crawlable areas in your robots.txt file to prevent unnecessary crawling and resource consumption.

6. Focusing on Minor Content Changes:

Small or insignificant changes to your website’s content may not warrant immediate crawling by search engines. Focus on making substantial updates that enhance the overall quality and relevance of your content to attract more crawling effort.

7. Ignoring Duplicate Content Issues:

Duplicate content can confuse search engines and potentially hurt your website’s rankings. Identify and eliminate duplicate content to ensure that your website’s unique content receives the attention it deserves.

8. Failing to Monitor Crawl Performance:

Regularly monitor your website’s crawl performance using tools like Google Search Console to identify any crawl budget limitations or inefficiencies. Analyze crawl errors, identify problematic pages, and implement appropriate optimization strategies.

9. Ignoring Link Quality and Authority:

Link popularity plays a crucial role in determining crawl budget allocation. Build high-quality backlinks from reputable sources to enhance your website’s authority and attract more crawling effort from search engines.

10. Overlooking Crawl Rate Optimization:

While increasing crawl budget is generally beneficial, overdoing it can overwhelm your server and lead to crawling errors and performance issues. Optimize crawl rates based on your website’s infrastructure and traffic patterns to maintain a healthy balance.
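Some of these pitfalls can be checked with a small script. As one example, the sketch below looks for the redirect chains described in pitfall 2, given a mapping of redirects you have exported from your server or CMS. The `redirects` data and both helper functions are hypothetical, and the one-redirect threshold is an assumption you can change.

```python
def redirect_chain(url, redirects, max_hops=10):
    """Follow redirects starting at url and return every URL visited."""
    chain = [url]
    while chain[-1] in redirects and len(chain) <= max_hops:
        target = redirects[chain[-1]]
        chain.append(target)
        if chain.count(target) > 1:
            # The target was already visited, so this is a redirect loop.
            break
    return chain


def long_chains(redirects, max_redirects=1):
    """Return the chains that need more than max_redirects redirects."""
    result = {}
    for url in redirects:
        chain = redirect_chain(url, redirects)
        if len(chain) - 1 > max_redirects:
            result[url] = chain
    return result


# Made-up redirect map: /old needs two redirects to reach /new.
redirects = {"/old": "/older", "/older": "/new", "/promo": "/sale"}
chains = long_chains(redirects)
print(chains)
```

Pointing each flagged URL straight at its final destination removes the extra hops a crawler would otherwise spend requests on.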

Conclusion

Here’s a breakdown of the key points:

- Crawl budget is the amount of resources Googlebot allocates to crawl and index your website’s pages. A healthy crawl budget ensures that Googlebot can effectively crawl and index your most important content.

- Several factors influence crawl budget:

  - Site structure: A clear site structure with well-organized navigation makes it easier for Googlebot to find and crawl your content.

  - Content quality: High-quality, unique content that is relevant to search queries attracts more crawl effort.

  - Link popularity: Backlinks from reputable websites signal to Googlebot that your content is valuable and worth crawling.

  - Server performance: Fast server response times allow Googlebot to crawl pages quickly and efficiently.

- To optimize crawl budget:

  - Submit a sitemap to Google Search Console: This provides Googlebot with a roadmap of your website’s content.

  - Use robots.txt to restrict access to non-essential pages: This prevents Googlebot from wasting resources on pages that don’t contribute to search engine rankings.

  - Monitor crawl errors and fix them promptly: Errors can prevent Googlebot from crawling important pages.

  - Regularly update and maintain your website: This keeps search engines informed of the latest content.

By following these tips, you can ensure that your website receives the optimal crawl budget and gains better visibility in search results.

If you aren’t sure how the crawl budget of your website is affecting your search engine visibility, you can contact us to help you optimise it and rank your business better in the search engine results.